Stochastic Gradient Descent

Stochastic gradient descent (SGD) is an optimization algorithm widely used in machine learning. It is an iterative algorithm that seeks the minimum of a function by taking small steps in the direction of the negative gradient of the function. At each iteration, the parameters are updated in the direction that reduces the function's value, with the gradient estimated from a randomly chosen observation or small subset of the training data rather than from the full data set.
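
The update described above can be written compactly. The notation is an assumption introduced here for illustration and does not come from the text: \theta_k is the parameter vector at iteration k, \eta is the learning rate (step size), and f is the function being minimized, taken to be an average of per-observation losses f_i over n observations.

```latex
% Plain gradient descent: step against the full gradient
\theta_{k+1} = \theta_k - \eta \, \nabla f(\theta_k),
\qquad
f(\theta) = \frac{1}{n} \sum_{i=1}^{n} f_i(\theta)

% Stochastic gradient descent: step against the gradient of one
% randomly drawn observation i_k (or the average over a small batch)
\theta_{k+1} = \theta_k - \eta \, \nabla f_{i_k}(\theta_k),
\qquad
i_k \text{ drawn at random from } \{1, \dots, n\}
```

Each stochastic step is cheaper than a full-gradient step because it touches only one observation or batch, which is where the efficiency on large data sets comes from.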

Stochastic gradient descent is a simple and very efficient approach to fit linear models. It is particularly useful when the number of samples is very large. It supports different loss functions and penalties for classification. The tutorial by Singh listed under Additional Information shows how to develop SGD from scratch.

Advantages: Efficiency and ease of implementation.

Disadvantages: Requires a number of hyper-parameters and is sensitive to feature scaling.
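
One common way to handle the sensitivity to feature scaling is to standardize the features before fitting. The sketch below is an illustration under assumptions, not a recommended configuration: it uses the scikit-learn digits data set as stand-in data, and the `loss`, `penalty`, and `alpha` values shown are simply the kind of hyper-parameters that SGD asks the user to choose.

```python
from sklearn.datasets import load_digits
from sklearn.linear_model import SGDClassifier
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Stand-in data set (an assumption for this sketch)
X, y = load_digits(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# The pipeline fits the scaler on the training data only and then
# applies the same scaling whenever the model predicts.
model = make_pipeline(
    StandardScaler(),
    SGDClassifier(loss='hinge', penalty='l2', alpha=1e-4, random_state=0),
)
model.fit(X_train, y_train)

accuracy = model.score(X_test, y_test)
print("Test accuracy with scaled features: %0.2f" % accuracy)
```

Putting the scaler inside the pipeline keeps the test data out of the scaling statistics.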

Gradient Descent in Python

Here is a simple example of gradient descent in Python:

# initial guess for the parameters
p = [1, 2, 3]

# define the function to be minimized
def my_func(p):
    return (p[0]-4)**2 + (p[1]-5)**2 + (p[2]-6)**2

# define the gradient of the function
def grad(p):
    return [2*(p[0]-4), 2*(p[1]-5), 2*(p[2]-6)]

# set the learning rate
lr = 0.01

# perform gradient descent for a number of iterations
for i in range(1000):
    # calculate the gradient
    g = grad(p)
    # update the parameters
    p[0] = p[0] - lr * g[0]
    p[1] = p[1] - lr * g[1]
    p[2] = p[2] - lr * g[2]

# print the final parameters
print(p)

In this example, we define a simple function to be minimized and its gradient, and then use gradient descent to find the minimum by updating the parameters in the direction of the negative gradient. After 1000 iterations, the final values of the parameters are close to the minimum of the function at p = [4, 5, 6]. Other algorithms, such as conjugate gradient, Newton's method, and steepest descent, also use gradient (derivative) information to find an optimal solution.

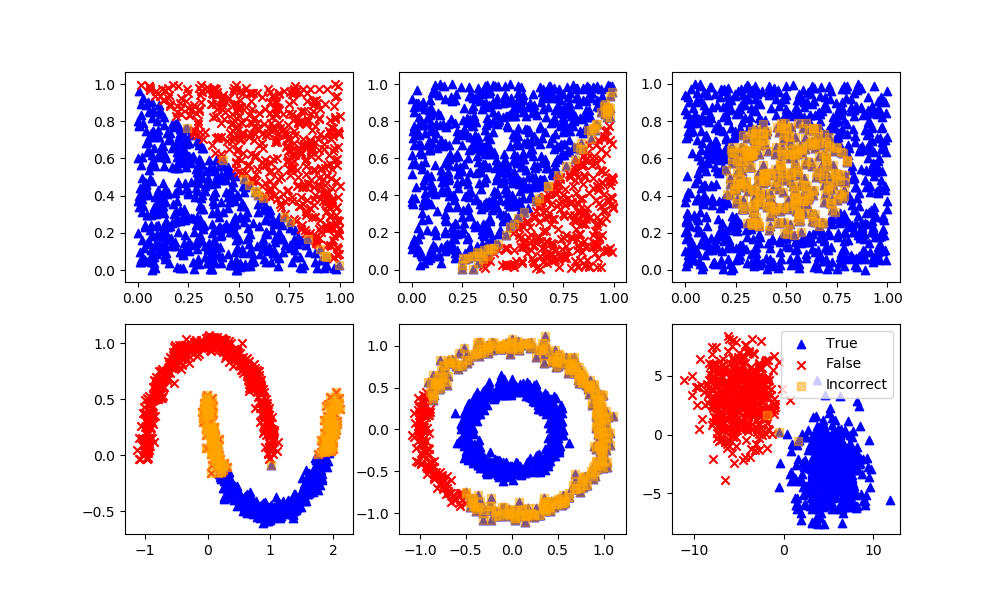
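
The learning rate is the main hyper-parameter in the example above, and a small experiment can show how much it matters. The sketch below reuses the same quadratic function. The helper names `gradient_descent` and `distance_to_minimum` and the list of learning rates are assumptions made for illustration.

```python
def grad(p):
    """Gradient of (p[0]-4)**2 + (p[1]-5)**2 + (p[2]-6)**2."""
    return [2 * (p[0] - 4), 2 * (p[1] - 5), 2 * (p[2] - 6)]


def gradient_descent(start, lr, n_iter):
    """Run plain gradient descent and return the final parameters."""
    p = list(start)  # copy so the caller's list is not modified
    for _ in range(n_iter):
        g = grad(p)
        p = [pi - lr * gi for pi, gi in zip(p, g)]
    return p


def distance_to_minimum(p):
    """Largest absolute difference from the known minimum [4, 5, 6]."""
    return max(abs(pi - ti) for pi, ti in zip(p, [4, 5, 6]))


# Same starting point and iteration count, different learning rates
for lr in (0.001, 0.01, 0.1, 1.1):
    p = gradient_descent([1, 2, 3], lr=lr, n_iter=100)
    print(f"lr={lr}: distance to minimum = {distance_to_minimum(p):.3g}")
```

For this particular quadratic, each step multiplies the distance to the minimum by |1 - 2*lr|, so a very small learning rate converges slowly, a moderate one converges quickly, and a learning rate above 1 moves away from the minimum.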

Stochastic Gradient Descent in Python

In stochastic gradient descent, observations are chosen randomly from the training set, so each iteration trains on a random subset of the training set rather than on all of it. The tutorial by Singh listed under Additional Information gives a complete example of SGD from scratch. Python also has an SGD classification algorithm in the scikit-learn package.

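from sklearn.linear_model import SGDClassifier

# XA, yA: training features and labels; XB: test features (assumed to be defined already)
sgd = SGDClassifier(loss='modified_huber',
                    shuffle=True,
                    random_state=101)
sgd.fit(XA, yA)       # train on the training set
yP = sgd.predict(XB)  # predict labels for the test set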

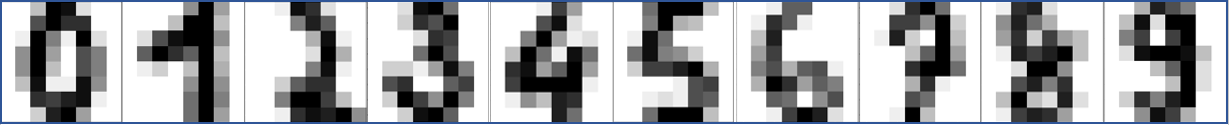
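
To make the random sampling concrete, here is a minimal from-scratch sketch of mini-batch SGD for a one-variable linear regression. It is not the tutorial's implementation: the synthetic data, the squared-error loss, and the values of `lr`, `batch_size`, and the number of epochs are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (hypothetical): y = 3*x + 2 plus a little noise
n_samples = 200
x = rng.uniform(-1, 1, size=n_samples)
y = 3.0 * x + 2.0 + 0.1 * rng.normal(size=n_samples)

w, b = 0.0, 0.0   # parameters to learn (slope and intercept)
lr = 0.05         # learning rate
batch_size = 10   # observations used per update

for epoch in range(50):
    order = rng.permutation(n_samples)  # new random order each epoch
    for start in range(0, n_samples, batch_size):
        idx = order[start:start + batch_size]
        err = w * x[idx] + b - y[idx]
        # gradient of the mean squared error on this mini-batch only
        grad_w = 2.0 * np.mean(err * x[idx])
        grad_b = 2.0 * np.mean(err)
        w -= lr * grad_w
        b -= lr * grad_b

print(f"w = {w:.2f}, b = {b:.2f}")
```

Each update uses only `batch_size` observations, so the gradient is a noisy estimate of the full gradient. The learned `w` and `b` should land near the values used to generate the data, though not exactly on them.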

Optical Character Recognition with SGD in Python

Optical character recognition (OCR) is a method used to convert images of text into machine-readable text. It is typically used to process scanned documents and recognize the text in the images. Stochastic gradient descent (SGD) is a method for training a machine learning model, such as a neural network, by iteratively updating the model's parameters to minimize a loss function. In the context of OCR, SGD can be used to train a neural network to recognize characters in images of text.

Here is an example of using SGD as a solver to train an OCR neural network model in Python:

from sklearn.datasets import load_digits
from sklearn.neural_network import MLPClassifier
from sklearn.model_selection import train_test_split

# Load the dataset of images of handwritten digits
digits = load_digits()

# Split the dataset into training and testing sets
X_train, X_test, y_train, y_test = train_test_split(
    digits.data, digits.target, random_state=0)

# Create a neural network classifier
clf = MLPClassifier(max_iter=1000, tol=1e-3,
                    solver='sgd', random_state=0)

# Train the model using the training set
clf.fit(X_train, y_train)

# Evaluate the model performance on the test set
accuracy = clf.score(X_test, y_test)
print("Accuracy: %0.2f" % accuracy)

In this example, we use the scikit-learn library to load the dataset of images of handwritten digits, split the dataset into training and testing sets, and train a neural network classifier using SGD. We then evaluate the model's performance on the test set by computing the accuracy, which is the proportion of test images that the model correctly identifies.
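
The accuracy described above can also be computed by hand as a check. This sketch repeats the same setup and compares the proportion of correct predictions with the value returned by `score`. The variable names `manual_accuracy` and `score_accuracy` are introduced here for illustration.

```python
import numpy as np
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier

digits = load_digits()
X_train, X_test, y_train, y_test = train_test_split(
    digits.data, digits.target, random_state=0)

clf = MLPClassifier(max_iter=1000, tol=1e-3, solver='sgd', random_state=0)
clf.fit(X_train, y_train)

# Accuracy by hand: fraction of test images whose predicted label is correct
y_pred = clf.predict(X_test)
manual_accuracy = np.mean(y_pred == y_test)

# Accuracy as reported by the classifier's score method
score_accuracy = clf.score(X_test, y_test)

print("Manual accuracy:  %0.4f" % manual_accuracy)
print("score() accuracy: %0.4f" % score_accuracy)
```

The two numbers should agree, since `score` reports mean accuracy for classifiers.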

SGD scikit-learn Classifier

SGD is also available as a stand-alone classifier in scikit-learn, not just as a solver for other methods. Below is an example of OCR with the SGDClassifier.

from sklearn import datasets
from sklearn.model_selection import train_test_split
from sklearn.linear_model import SGDClassifier
import matplotlib.pyplot as plt
import numpy as np

classifier = SGDClassifier(loss='modified_huber',
                           shuffle=True,
                           random_state=101)

# The digits dataset
digits = datasets.load_digits()
n_samples = len(digits.images)
data = digits.images.reshape((n_samples, -1))

# Split into train and test subsets (50% each)
X_train, X_test, y_train, y_test = train_test_split(
    data, digits.target, test_size=0.5, shuffle=False)

# Learn the digits on the first half of the digits
classifier.fit(X_train, y_train)

# Test on a randomly chosen digit from the second half of the data
n = np.random.randint(int(n_samples/2), n_samples)
print('Predicted: ' + str(classifier.predict(digits.data[n:n+1])[0]))

# Show number
plt.imshow(digits.images[n], cmap=plt.cm.gray_r, interpolation='nearest')
plt.show()
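
The example above checks a single random digit. To see how the classifier does on the whole second half, one option is to compute the accuracy and a confusion matrix. This is a sketch under the same settings as above. The use of `confusion_matrix` is an addition for illustration and not part of the original example.

```python
from sklearn import datasets
from sklearn.linear_model import SGDClassifier
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import train_test_split

digits = datasets.load_digits()
data = digits.images.reshape((len(digits.images), -1))

# Same 50/50 split without shuffling as in the example above
X_train, X_test, y_train, y_test = train_test_split(
    data, digits.target, test_size=0.5, shuffle=False)

classifier = SGDClassifier(loss='modified_huber',
                           shuffle=True,
                           random_state=101)
classifier.fit(X_train, y_train)

# Proportion of digits in the second half that are classified correctly
accuracy = classifier.score(X_test, y_test)
print("Accuracy on second half: %0.2f" % accuracy)

# Rows are the true digits, columns are the predicted digits
cm = confusion_matrix(y_test, classifier.predict(X_test))
print(cm)
```

Off-diagonal entries in the matrix show which digits are confused with which.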

Additional Information

- Singh, J. Implementing SGD From Scratch, Towards Data Science.

✅ Knowledge Check

1. What is the primary purpose of Stochastic Gradient Descent (SGD)?

- Incorrect. The primary purpose of SGD is to minimize a function. It works by updating the function's parameters in the direction that reduces its value.

- Incorrect. SGD seeks to find the minimum, not the maximum, of a function. The steps it takes are based on the negative gradient of the function, not random directions.

- Correct. SGD is an iterative algorithm that seeks to find the minimum of a function by taking small steps in the direction of the negative gradient.

- Incorrect. SGD is an optimization algorithm that can be used to train both linear and non-linear models. Its primary advantage is efficiency, especially with large datasets.

2. Which of the following statements about SGD is NOT true?

- Incorrect. This statement is true. SGD is efficient and especially useful when dealing with large datasets.

- Correct. This statement is false. One of the disadvantages of SGD is that it requires a number of hyper-parameters, such as the learning rate.

- Incorrect. This statement is true. SGD trains on a random subset of the training set between iterations, which differentiates it from standard gradient descent.

- Incorrect. This statement is true. One of the characteristics of SGD is its sensitivity to feature scaling, which is why preprocessing and normalization of features can be crucial when using this algorithm.
