XGBoost Regressor

XGBoost is an open-source software library that provides a gradient boosting framework for C++, Python, R, Java, Julia, and many other languages. It is designed to be efficient, flexible, and portable, and it has become one of the most popular machine learning libraries in the world.

In the context of regression, XGBoost is a type of supervised learning algorithm that can be used to make predictions on continuous numerical data. It is an implementation of the gradient boosting machine learning algorithm, which is a type of ensemble learning method that combines the predictions of multiple weaker models to create a stronger, more accurate model.

XGBoost works by building an ensemble of decision trees. The trees are grown sequentially: each new tree is fit to the errors (the gradient of the loss) left by the trees before it, optionally using a random subsample of the rows and columns. The final prediction is not an average. It is an initial base score plus the sum of the outputs of all trees in the ensemble, each scaled by the learning rate.
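The sequential idea can be illustrated with a minimal sketch of plain gradient boosting for squared error. This is a simplified stand-in rather than XGBoost's actual algorithm, which also uses second-order gradient information and regularized leaf weights. The scikit-learn `DecisionTreeRegressor`, the synthetic data, and names such as `boosted_predict` are assumptions made for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.tree import DecisionTreeRegressor

X, y = make_regression(n_samples=500, n_features=5, noise=10.0, random_state=0)

learning_rate = 0.1   # shrinkage applied to every tree's contribution
n_trees = 50

# Start from a constant prediction (the mean minimizes squared error)
base = y.mean()
pred = np.full(len(y), base)
trees = []
mse_history = [np.mean((y - pred) ** 2)]

for _ in range(n_trees):
    residual = y - pred                      # negative gradient of 0.5*(y - pred)^2
    tree = DecisionTreeRegressor(max_depth=3, random_state=0)
    tree.fit(X, residual)                    # each tree fits what is still unexplained
    pred += learning_rate * tree.predict(X)  # add (not average) the shrunken correction
    trees.append(tree)
    mse_history.append(np.mean((y - pred) ** 2))

def boosted_predict(X_new):
    """Base value plus the sum of the shrunken tree outputs."""
    out = np.full(X_new.shape[0], base)
    for tree in trees:
        out += learning_rate * tree.predict(X_new)
    return out

print('training MSE: first', mse_history[0], 'last', mse_history[-1])
```

The training error falls as trees are added because every tree corrects the residual of the running sum. A smaller `learning_rate` makes each correction more cautious and usually calls for more trees.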

One of the key advantages of XGBoost is its ability to handle missing data and large datasets efficiently. It also has a number of hyperparameters that can be tuned to improve model performance, including the learning rate, depth of the trees, and regularization parameters.

Overall, XGBoost is a powerful and widely used tool for regression tasks, and it has been applied successfully to a variety of real-world problems such as predictive modeling and time series forecasting. Classification problems such as customer churn prediction use the classifier variant instead.

Advantages: Effective with large data sets. Tree algorithms such as XGBoost and Random Forest do not need normalized features and work well if the data is nonlinear, non-monotonic, or contains segregated clusters.

Disadvantages: Tree algorithms such as XGBoost and Random Forest can over-fit the data, especially when deep trees are fit to noisy data.

import xgboost as xgb

# XA, yA: training features and targets; XB: features to predict
xgbr = xgb.XGBRegressor()

xgbr.fit(XA,yA)

yP = xgbr.predict(XB)

from sklearn.datasets import make_regression

from sklearn.metrics import r2_score

from sklearn.model_selection import train_test_split

import pandas as pd

import xgboost as xgb

X, y = make_regression(n_samples=1000, n_features=10, n_informative=8)

Xa,Xb,ya,yb = train_test_split(X, y, test_size=0.2, shuffle=True)

xgbr = xgb.XGBRegressor()

xgbr.fit(Xa,ya)

yp = xgbr.predict(Xb)

acc = r2_score(yb,yp)

print('R2='+str(acc))

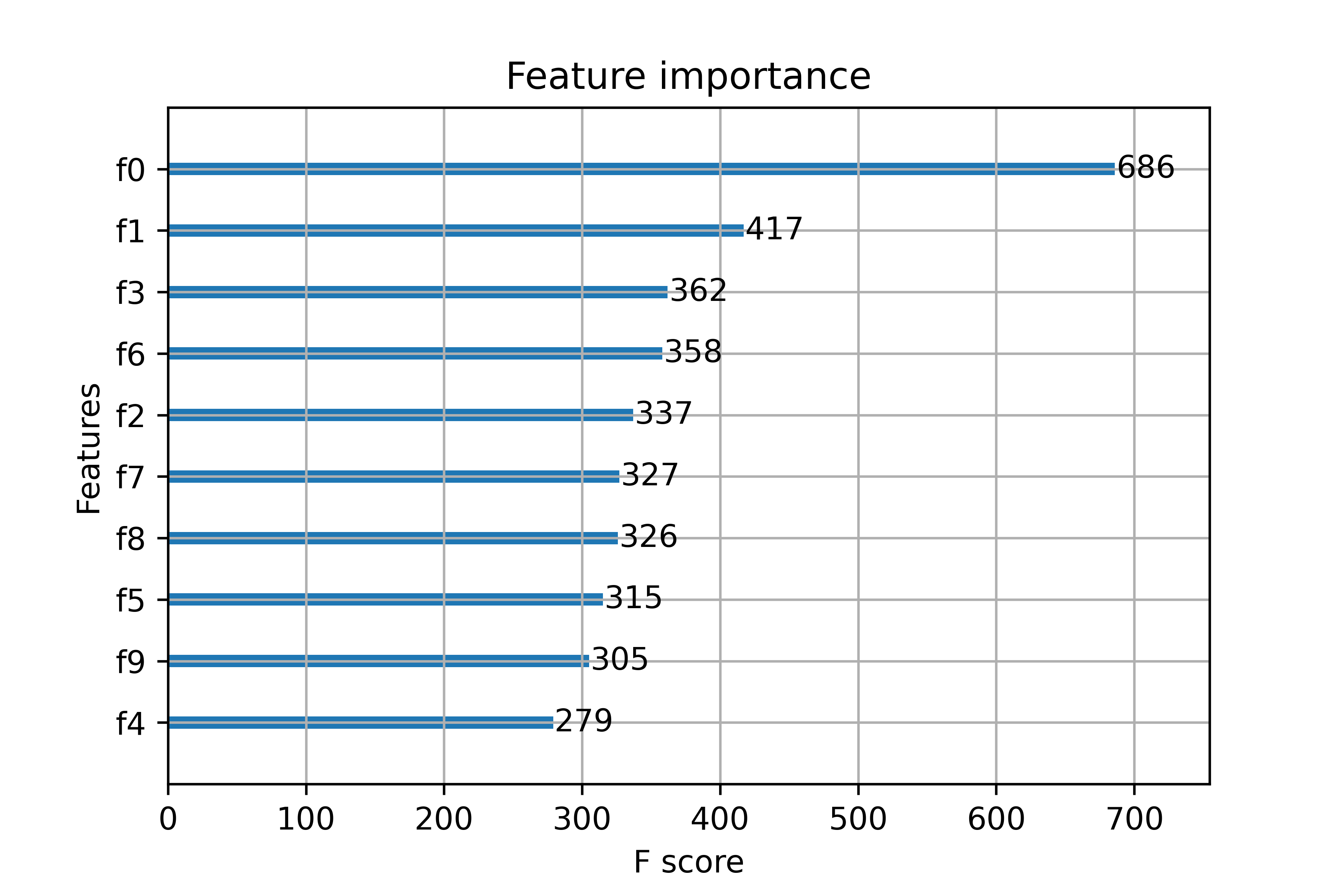

# importance_types = ['weight', 'gain', 'cover', 'total_gain', 'total_cover']

f = xgbr.get_booster().get_score(importance_type='gain')

t = pd.DataFrame(f.items(),columns=['Feature','Gain'])

print(t.sort_values('Gain',ascending=False))

# create table from feature_importances_

fi = xgbr.feature_importances_

n = ['Feature '+str(i) for i in range(10)]

d = pd.DataFrame({'Feature':n,'Importance':fi})

print(d.sort_values('Importance',ascending=False))

# plot the importance by 'weight'

xgb.plot_importance(xgbr)

R2 = 0.796


Reference

Abu-Rmileh, A., The Multiple faces of ‘Feature importance’ in XGBoost, Towards Data Science, Feb 2019.

See also XGBoost Classifier