Hand Tracking

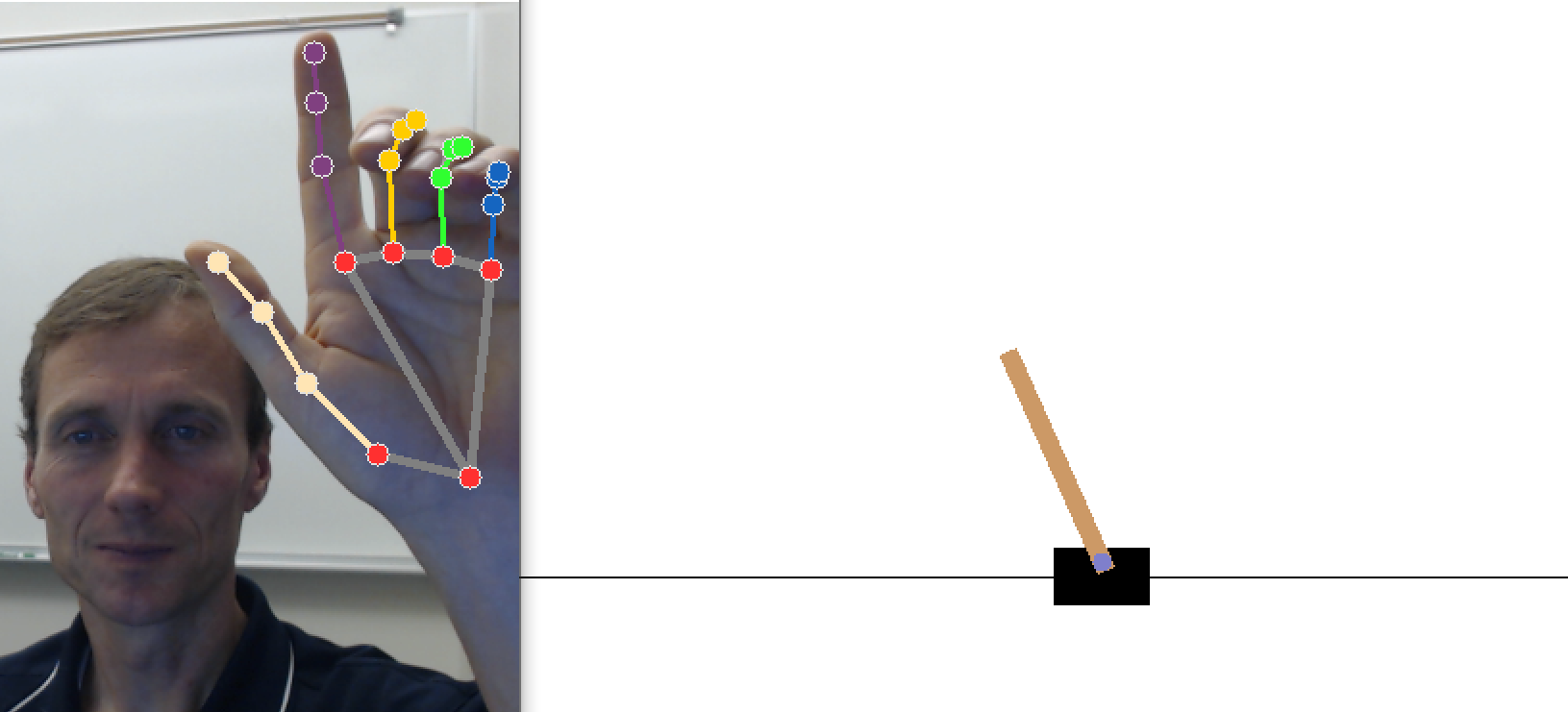

Objective: Use MediaPipe Hands to balance a pole on a cart and control an LED.

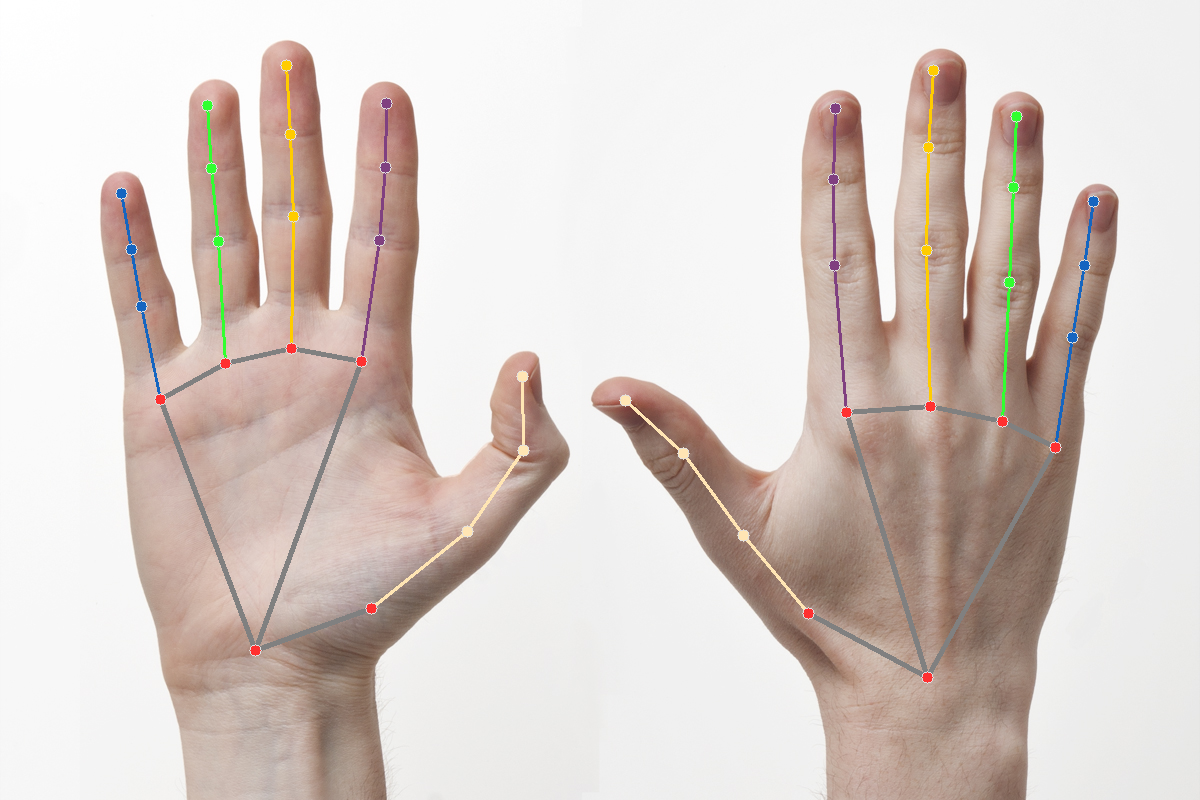

MediaPipe detects the shape and motion of hands (see Online Demo). Hand gestures can be the basis for computer control of simulated or physical objects.

The purpose of this exercise is to demonstrate the use of hand detection for control of a simulated cart pole and a physical LED.

import cv2
import mediapipe as mp
import os
import urllib.request

mp_drawing = mp.solutions.drawing_utils
mp_drawing_styles = mp.solutions.drawing_styles
mp_hands = mp.solutions.hands

# download image as hands.jpg
url = 'http://apmonitor.com/pds/uploads/Main/hands.jpg'
urllib.request.urlretrieve(url, 'hands.jpg')
IMAGE_FILES = ['hands.jpg']

with mp_hands.Hands(
    static_image_mode=True,
    max_num_hands=2,
    min_detection_confidence=0.5) as hands:
    for idx, file in enumerate(IMAGE_FILES):
        # Read the image and flip it around the y-axis
        # for correct handedness output.
        image = cv2.flip(cv2.imread(file), 1)
        # Convert the BGR image to RGB before processing.
        results = hands.process(cv2.cvtColor(image,
                                             cv2.COLOR_BGR2RGB))

        # Print handedness and draw hand landmarks on the image.
        print('Handedness:', results.multi_handedness)
        if not results.multi_hand_landmarks:
            continue
        image_height, image_width, _ = image.shape
        annotated_image = image.copy()
        for hand_landmarks in results.multi_hand_landmarks:
            tip = mp_hands.HandLandmark.INDEX_FINGER_TIP
            print('hand_landmarks:', hand_landmarks)
            print(
                'Index finger tip coordinates: (',
                f'{hand_landmarks.landmark[tip].x * image_width}, '
                f'{hand_landmarks.landmark[tip].y * image_height})'
            )
            mp_drawing.draw_landmarks(
                annotated_image,
                hand_landmarks,
                mp_hands.HAND_CONNECTIONS,
                mp_drawing_styles.get_default_hand_landmarks_style(),
                mp_drawing_styles.get_default_hand_connections_style())
        # Flip back before saving the annotated image.
        cv2.imwrite('annotated_image' + str(idx) + '.png',
                    cv2.flip(annotated_image, 1))

        # Draw hand world landmarks.
        if not results.multi_hand_world_landmarks:
            continue
        for hand_world_landmarks in results.multi_hand_world_landmarks:
            mp_drawing.plot_landmarks(
                hand_world_landmarks,
                mp_hands.HAND_CONNECTIONS, azimuth=5)
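The script above scales the normalized landmark coordinates by the image size to print the index fingertip location. As a minimal sketch of that conversion, the helper `to_pixel` below maps a normalized (x, y) pair to integer pixel indices. The function name is hypothetical and not part of MediaPipe. The clamping step is an added assumption, included because a landmark can fall slightly outside the frame when the hand is near the image border.

```python
def to_pixel(x_norm, y_norm, image_width, image_height):
    """Convert normalized coordinates (0 to 1) to clamped pixel indices."""
    # Scale by the image size, as in the fingertip print statement.
    px = int(x_norm * image_width)
    py = int(y_norm * image_height)
    # Clamp so the result is always a valid index into the image array.
    px = max(0, min(image_width - 1, px))
    py = max(0, min(image_height - 1, py))
    return px, py


# Example with a hypothetical 640 x 480 image
print(to_pixel(0.5, 0.25, 640, 480))
```

With the landmark objects from the script, the call would take the form `to_pixel(hand_landmarks.landmark[tip].x, hand_landmarks.landmark[tip].y, image_width, image_height)`.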

Getting Started

These exercises require a few packages that can be installed with pip: OpenCV, MediaPipe from Google, and Gymnasium from the Farama Foundation.

pip install mediapipe pyglet pygame

pip install gymnasium tclab

The exercises also require a webcam for video input and the Temperature Control Lab for LED control.
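Before starting, it can help to confirm that the packages are visible to the Python interpreter that will run the scripts. The sketch below uses only the standard library. The helper name `missing_packages` is hypothetical, and the list of import names is an assumption based on the imports used in the scripts on this page (`cv2` is the import name for OpenCV).

```python
import importlib.util


def missing_packages(names):
    """Return the import names that cannot be found in this environment."""
    return [n for n in names if importlib.util.find_spec(n) is None]


# Import names assumed from the scripts in this exercise
required = ['cv2', 'mediapipe', 'gymnasium', 'pygame', 'tclab']
print('Missing packages:', missing_packages(required))
```

An empty list means every package was found. Any name that is listed can be installed with pip.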

Cart Pole

A pole is attached by a frictionless joint to a cart that moves along a track. The cart is controlled by applying a force to the left (action=0) or right (action=1). The pendulum starts upright, and a reward of +1 is given for every timestep that the pole remains upright. The gym reports that the episode is done when the pole is more than 12° from vertical or the cart is more than 2.4 units from the center of the track.
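The failure condition can be written as a short check on two of the observed values. This is a sketch and not the Gymnasium implementation: `episode_failed` is a hypothetical helper, the pole angle is assumed to be in radians as in the CartPole observation, and the default limits (12° and 2.4 units) are assumptions taken from the Gymnasium CartPole documentation. They are arguments so that other values can be tested.

```python
import math


def episode_failed(cart_position, pole_angle_rad,
                   angle_limit_deg=12.0, position_limit=2.4):
    """Return True when the pole angle or cart position exceeds its limit."""
    angle_deg = math.degrees(pole_angle_rad)
    too_tilted = abs(angle_deg) > angle_limit_deg
    off_track = abs(cart_position) > position_limit
    return too_tilted or off_track


print(episode_failed(0.0, math.radians(5)))   # within both limits
print(episode_failed(0.0, math.radians(20)))  # pole tilted past the limit
print(episode_failed(2.5, 0.0))               # cart past the position limit
```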

import gymnasium as gym

env = gym.make("CartPole-v1", render_mode='human')
observation, info = env.reset()
for _ in range(100):
    env.render()
    # Input:
    #   Force to the cart with actions: 0=left, 1=right
    # Returns:
    #   obs = cart position, cart velocity, pole angle, rot rate
    #   reward = +1 for every timestep
    #   terminated = True when abs(angle)>12 deg or abs(cart pos)>2.4
    #   truncated = True when the episode time limit is reached
    action = env.action_space.sample() # random action
    observation, reward, terminated, truncated, info = env.step(action)
    done = terminated or truncated
    if done:
        observation, info = env.reset()
env.close()
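The loop above samples random actions. For comparison, a simple hand-written rule is to push the cart toward the side the pole is leaning. The sketch below assumes the observation order listed in the comments (cart position, cart velocity, pole angle, rotation rate) and assumes that a positive pole angle means the pole leans to the right. `lean_policy` is a hypothetical helper, not part of Gymnasium.

```python
def lean_policy(observation):
    """Push right (1) if the pole leans right, otherwise push left (0)."""
    pole_angle = observation[2]
    return 1 if pole_angle > 0 else 0


# Example observations: [cart position, cart velocity, pole angle, rot rate]
print(lean_policy([0.0, 0.0, 0.05, 0.0]))
print(lean_policy([0.0, 0.0, -0.05, 0.0]))
```

To try it, replace the random action with `action = lean_policy(observation)`, where `observation` is the array of four values returned by `env.step` (in Gymnasium, `env.reset()` returns the observation together with an info dictionary, so only the first element is passed). This rule ignores the cart position, so the cart can still drift toward the edge of the track.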

Use the webcam input to control the force on a cart. Maintaining the inverted pendulum is very challenging with hand control. This gym exercise is a benchmark for Reinforcement Learning (RL) where algorithms successively improve based on experience. As a first step, practice moving your hand to center the cart position. As a next step, try to stop the pendulum motion while it is facing down. As a final step, try to balance the pendulum.

# run as a script from the terminal
import cv2
import mediapipe as mp
import os
import gymnasium as gym
import random
import warnings
import time

env = gym.make("CartPole-v1", render_mode='rgb_array')
observation, info = env.reset()
warnings.filterwarnings("ignore", category=UserWarning)

mp_drawing = mp.solutions.drawing_utils
mp_drawing_styles = mp.solutions.drawing_styles
mp_hands = mp.solutions.hands

# Webcam input
error_count = 0
cap = cv2.VideoCapture(0)
with mp_hands.Hands(model_complexity=0, min_detection_confidence=0.5,
                    min_tracking_confidence=0.5) as hands:
    while cap.isOpened():
        success, image = cap.read()
        if image is None:
            # retry up to 100 times before giving up on the webcam
            time.sleep(0.1)
            error_count += 1
            if error_count<100:
                continue
            else:
                print('Error reading from webcam')
                break
        else:
            error_count = 0

        image.flags.writeable = False
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        results = hands.process(image)

        # Draw the hand annotations on the image.
        image.flags.writeable = True
        image = cv2.cvtColor(image, cv2.COLOR_RGB2BGR)
        hand_landmarks = None
        if results.multi_hand_landmarks:
            for hand_landmarks in results.multi_hand_landmarks:
                mp_drawing.draw_landmarks(
                    image,
                    hand_landmarks,
                    mp_hands.HAND_CONNECTIONS,
                    mp_drawing_styles.get_default_hand_landmarks_style(),
                    mp_drawing_styles.get_default_hand_connections_style())
        # Flip the image horizontally for a selfie-view display.
        image2 = cv2.flip(image, 1)
        # Resize image
        width = 800
        height = int(image.shape[0]*(width/image2.shape[1]))
        dim = (width,height)
        image2 = cv2.resize(image2, dim)

        # normalized x position of the index fingertip (0.5 if no hand)
        if hand_landmarks:
            t = mp_hands.HandLandmark.INDEX_FINGER_TIP
            x = hand_landmarks.landmark[t].x
        else:
            x = 0.5

        # determine action
        r = random.random()
        if x<0.3:
            action = 1
        elif x>0.7:
            action = 0
        else:
            if r>=x:
                action = 1
            else:
                action = 0

        # add slider to webcam image
        pt1 = (int(400-(x-0.5)*700),20)
        pt2 = (400,20)
        color = (int(x*255),int((1-x)*255),255)
        cv2.line(image2, pt1, pt2, color, 10)

        # render the cart as an RGB array and convert to BGR for OpenCV
        cart = env.render()
        cart = cv2.cvtColor(cart, cv2.COLOR_RGB2BGR)
        # take bottom 2/3 of image
        cart = cart[int(cart.shape[0]/3):]
        height = int(cart.shape[0] * (image2.shape[1]/cart.shape[1]))
        dim = (image2.shape[1], height)
        cart = cv2.resize(cart, dim)

        # show image
        image3 = cv2.vconcat([cart,image2])
        cv2.imshow('Hands - CartPole', image3)
        if cv2.waitKey(5) & 0xFF == 27:
            break

        # send action; the episode-end flags are not used here so that
        # practice can continue after the pole falls
        observation, reward, terminated, truncated, info = env.step(action)
        # reset only when the cart moves more than 3 units from center
        if abs(observation[0])>3:
            observation, info = env.reset()
env.close()
cap.release()
cv2.destroyAllWindows()
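The action logic in the script can be isolated as a small function to see how fingertip position translates into force on the cart. Outside the middle band the push is always in one direction. Inside the band the direction is random, with the probability of a right push equal to 1 - x, so the average force changes gradually as the hand moves. The sketch below restates that logic. The function name `choose_action` and the arguments `low` and `high` are hypothetical names for the 0.3 and 0.7 thresholds in the script.

```python
import random


def choose_action(x, r=None, low=0.3, high=0.7):
    """Map a normalized fingertip x position to an action (0=left, 1=right)."""
    if r is None:
        r = random.random()  # uniform random number in [0, 1)
    if x < low:
        return 1             # always push right
    if x > high:
        return 0             # always push left
    return 1 if r >= x else 0  # push right with probability 1 - x


# Share of right pushes over many frames with the fingertip at x = 0.4
random.seed(0)
n = 10000
share_right = sum(choose_action(0.4) for _ in range(n)) / n
print(round(share_right, 2))
```

The x value comes from the unflipped webcam image, while the display is mirrored, so a small x corresponds to a hand that appears on the right side of the selfie view.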

Exercise

Adapt the webcam hand tracking script to control the TCLab LED. Adjust the TCLab brightness up and down as the index fingertip moves up and down. Adjust the blinking rate as the index fingertip moves to the left and right.

import tclab
import time

a = tclab.TCLab()
# Blink LED 10 times
for i in range(10):
    a.LED(100) # LED On, 100%
    time.sleep(0.5)
    a.LED(0)   # LED Off
    time.sleep(0.5)
a.close()

# control the TCLab LED with the index fingertip
import cv2
import mediapipe as mp
import os
import tclab
import random
import time

mp_drawing = mp.solutions.drawing_utils
mp_drawing_styles = mp.solutions.drawing_styles
mp_hands = mp.solutions.hands

lab = tclab.TCLab()

def blink(lab,pause,bright):
    # turn the LED on at the given brightness, wait, then turn it off
    lab.LED(bright)
    time.sleep(pause)
    lab.LED(0)
    return

# Webcam input
cap = cv2.VideoCapture(0)
with mp_hands.Hands(
    model_complexity=0,
    min_detection_confidence=0.5,
    min_tracking_confidence=0.5) as hands:
    while cap.isOpened():
        success, image = cap.read()
        if not success:
            # skip frames that could not be read from the webcam
            continue

        image.flags.writeable = False
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        results = hands.process(image)

        # Draw the hand annotations on the image.
        image.flags.writeable = True
        image = cv2.cvtColor(image, cv2.COLOR_RGB2BGR)
        hand_landmarks = None
        if results.multi_hand_landmarks:
            for hand_landmarks in results.multi_hand_landmarks:
                mp_drawing.draw_landmarks(
                    image,
                    hand_landmarks,
                    mp_hands.HAND_CONNECTIONS,
                    mp_drawing_styles.get_default_hand_landmarks_style(),
                    mp_drawing_styles.get_default_hand_connections_style())
        # Flip the image horizontally for a selfie-view display.
        image2 = cv2.flip(image, 1)
        cv2.imshow('MediaPipe Hands', image2)
        if cv2.waitKey(5) & 0xFF == 27:
            break

        # normalized fingertip position (x: 0=left, y: 0=top of image)
        if hand_landmarks:
            t = mp_hands.HandLandmark.INDEX_FINGER_TIP
            x = hand_landmarks.landmark[t].x
            y = hand_landmarks.landmark[t].y
        else:
            x = y = 0.5

        # horizontal position sets the pause (0 to 0.5 sec)
        pause = max(0,min(0.5,(1-x)*0.5))
        # vertical position sets the brightness (0 to 50%)
        bright = max(0,min(50,(1-y)*50))
        blink(lab,pause,bright)
lab.close()
cap.release()
cv2.destroyAllWindows()
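Because `blink` calls `time.sleep`, each pass through the webcam loop waits for the pause to finish before the next frame is read. One possible alternative, sketched here and not part of the script above, is to track the time of the last LED toggle and switch the state only when the pause has elapsed. The class name `Blinker` and its methods are hypothetical. The time is passed in as an argument so the logic can be checked without hardware.

```python
class Blinker:
    """Toggle an on/off state every `period` seconds without sleeping."""

    def __init__(self, start_time=0.0):
        self.on = False
        self.last_toggle = start_time

    def update(self, now, period):
        # Flip the state once at least `period` seconds have passed.
        if now - self.last_toggle >= period:
            self.on = not self.on
            self.last_toggle = now
        return self.on


# Simulated clock values (seconds) with a 0.5 second period
blinker = Blinker(start_time=0.0)
states = [blinker.update(t, 0.5) for t in (0.1, 0.5, 0.7, 1.0)]
print(states)
```

In the webcam loop, a call of the form `lab.LED(bright if blinker.update(time.monotonic(), pause) else 0)` could replace `blink(lab,pause,bright)`, with `blinker = Blinker(time.monotonic())` created before the loop.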